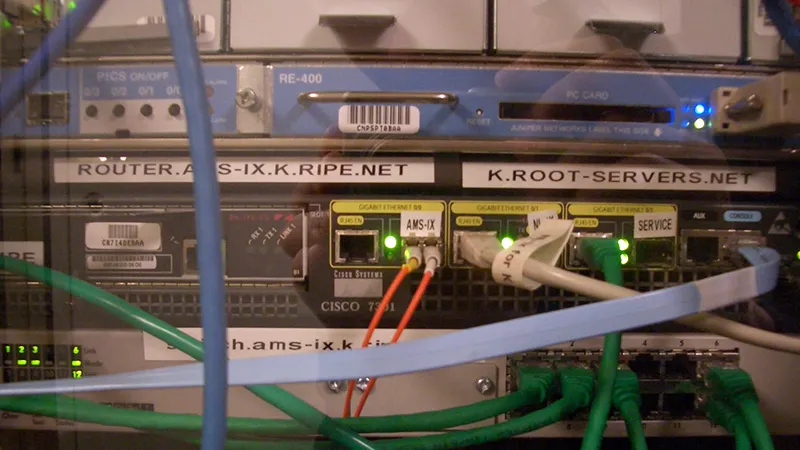

Does the US government really control the Internet? Ask a political scientist and you are likely to get a long and ambivalent response. A computer scientist would gamely field the question and emphasise that what matters is not “whether” but “when” the US government took over the reins of cyberspace. They would point to January 28, 1998, when Jon Postel, a network engineer at the University of Southern California (USC), asked the operators of “root servers” to redirect all Internet traffic through his computers. There are 13 “root servers” in the world, authoritative directories without which navigating to a website would be like finding a pin in the dark, and the operators of eight of them answered Mr. Postel’s call, directing traffic meant for an “official” server in Virginia to the USC campus. The Virginia-based server, containing the master file of addresses, was run by Network Solutions Inc. on a contract with the US Department of Defense. In one fell swoop, the DoD’s hold on the Internet was stripped of all legitimacy. Mr. Postel’s brazen show of rebellion reflected the topography of the Internet: it could be controlled by no one, unless everyone recognised a single entity as the authoritative source of all IP addresses and names.

To remedy this imbalance of power, the US government immediately sought to institutionalise the running of the Internet. The names and numbers that make up the Web (domain names, read by humans, and IP addresses, read by machines) would be managed by a not-for-profit corporation called the Internet Corporation for Assigned Names and Numbers, or ICANN. It would be based in the United States, and loosely affiliated to the US Department of Commerce through a contract.

In 2016, that contractual relationship will be terminated, setting the stage for the “privatisation” of the Domain Name System (DNS).

After the Postel episode, the Bill Clinton administration also conferred on itself the sole power to approve changes to the root zone. The legwork is still done by Network Solutions Inc., now Verisign, but the stamp of authority must come from the US Department of Commerce. There is likely to be no change to this relationship in the near or distant future.

An alternative root zone

But a project to create an “alternative root” for the Internet, driven by technical considerations, may change the rules of this game. An influential group of computer scientists is setting up a test bed to reroute the Internet’s traffic. The project, named “Yeti”, is run jointly by the Beijing Internet Institute, a Japan-based group called the Widely Integrated Distributed Environment (WIDE), and respected computer scientists like Paul Vixie. They are not concerned about legal or political control of the Internet, but about the challenge of connecting the next generation of Internet users, most of whom will come from developing countries.

Every device and server connected to the Internet has an IPv4 or IPv6 address, sometimes both, which it uses to send and receive packets of information. IPv4 addresses, used by an overwhelming majority of devices connected to the Internet today, are fast running out. In contrast, the IPv6 protocol allows for vastly more addresses, but relatively few devices use it. What’s more, a device with only an IPv6 address cannot communicate directly with one that has only an IPv4 address, and vice versa, raising the costs of interoperability.
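The gap between the two address spaces can be made concrete with Python's standard `ipaddress` module. This is a minimal sketch: the two sample addresses are taken from ranges reserved for documentation, and the helper `same_family` is a hypothetical name used only for illustration.

```python
import ipaddress

# Total number of addresses each protocol can express.
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses  # 2**32
ipv6_total = ipaddress.ip_network("::/0").num_addresses       # 2**128

# Illustrative addresses from ranges reserved for documentation.
v4 = ipaddress.ip_address("192.0.2.1")
v6 = ipaddress.ip_address("2001:db8::1")


def same_family(a, b):
    """Two hosts can exchange packets directly only if they share an IP version."""
    return a.version == b.version


if __name__ == "__main__":
    print(f"IPv4 addresses: {ipv4_total:,}")
    print(f"IPv6 addresses: {ipv6_total:,}")
    print("IPv4 address length in bytes:", len(v4.packed))
    print("IPv6 address length in bytes:", len(v6.packed))
    print("Direct IPv4-to-IPv6 communication possible:", same_family(v4, v6))
```

An IPv6 address is four times as long as an IPv4 address (16 bytes against 4), which is what makes the address space so much larger and, as the next paragraph explains, what makes the addresses harder to fit into a fixed-size packet.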

This creates a problem when devices with IPv6 addresses try to connect to the Internet’s root servers. The DNS response that tells a device where the root servers are carries the IPv4 addresses of all 13 of them in a single packet. This is to ensure that at least one root server, should the others be unavailable, can point a query towards its correct destination. Adding the longer IPv6 addresses of the root servers, however, would exceed that packet’s traditional capacity, making it hard for IPv6 devices to connect directly to these authoritative directories. To solve this problem, computer scientists are faced with two alternatives: one, create an inefficient method to first translate IPv6 addresses of devices to IPv4 addresses, which can then connect freely with the root servers; or two, create an alternative root zone that relies exclusively on IPv6 addresses.
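The capacity problem can be checked with some rough arithmetic. The sketch below assumes that the packet in question is the classic DNS response over UDP, which the original DNS specification caps at 512 bytes, that the response uses standard name compression, and that the root servers are named in the form `a.root-servers.net`. None of these details appear in the commentary itself, and the constant and function names are illustrative rather than part of the Yeti project.

```python
# Rough size of a DNS response that lists every root server.
# Assumptions (not from the article): classic 512-byte UDP limit,
# standard DNS name compression, names like a.root-servers.net.

UDP_LIMIT = 512            # classic DNS-over-UDP payload limit, in bytes
NUM_ROOTS = 13

HEADER = 12                # fixed DNS header
QUESTION = 1 + 2 + 2       # root name "." + query type + query class
RR_FIXED = 2 + 2 + 4 + 2   # type + class + TTL + data-length fields
POINTER = 2                # compressed reference to an earlier name


def ns_section(n):
    """Size of n name-server records; only the first spells its name in full."""
    full_name = len(b"\x01a\x0croot-servers\x03net\x00")  # length-prefixed labels
    first = 1 + RR_FIXED + full_name
    rest = 1 + RR_FIXED + (1 + 1 + POINTER)  # one-letter label, then a pointer
    return first + (n - 1) * rest


def address_section(n, addr_len):
    """Size of n address records whose owner names are compression pointers."""
    return n * (POINTER + RR_FIXED + addr_len)


def response_size(n, with_ipv6):
    """Total response size with IPv4 addresses, optionally adding IPv6 ones."""
    size = HEADER + QUESTION + ns_section(n) + address_section(n, 4)
    if with_ipv6:
        size += address_section(n, 16)
    return size


if __name__ == "__main__":
    for with_ipv6 in (False, True):
        size = response_size(NUM_ROOTS, with_ipv6)
        label = "IPv4 + IPv6" if with_ipv6 else "IPv4 only"
        print(f"{label}: {size} bytes, fits in {UDP_LIMIT}: {size <= UDP_LIMIT}")
```

Under these assumptions the IPv4-only response comes to 436 bytes, which fits, while adding an IPv6 address for each of the 13 servers brings it to 800 bytes, which does not.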

Strategic implications

The Yeti project adopts the latter approach. Yeti attempts to tackle two problems which figure high on the Internet governance agenda of developing economies like India and China. The first is the efficiency of local networks, which may experience connectivity problems if they are unable to reach the root servers. The second is the risk of surveillance: the operators of root servers, based mostly in the US and Europe, are able to observe DNS queries from any part of the world.

But at its core, Yeti appeals to digital economies that are on the cusp of growth. The Digital India programme promises “universal” mobile connectivity in five years: 500 million Internet users, who will use multiple devices with IPv6 addresses, could go online in less than 10 years. Not surprisingly, IPv6 deployment has been a stated policy goal of the Indian government since as far back as 2004. It is also no surprise that ERNET, India’s biggest government-owned university network, has joined the Yeti Project and operates a Yeti root server.

For all its technical motivations, Yeti has major strategic implications for Internet governance. The pioneers of Yeti have been careful to acknowledge the supremacy of ICANN, but the project will bring about a fundamental re-engineering of the Internet. A “pure” IPv6 environment will remove the ceiling on the number of root servers. The global distribution of root servers dovetails with India’s call for “inclusive and equitable” governance of critical Internet resources. If root servers are distributed globally, the formal and sole authority of the US government to approve changes to the root zone would be eroded over time. Strategic consequences aside, Yeti’s impact would be substantive and institutions like ERNET should continue supporting the project.

This commentary originally appeared in The Hindu.

The views expressed above belong to the author(s).
